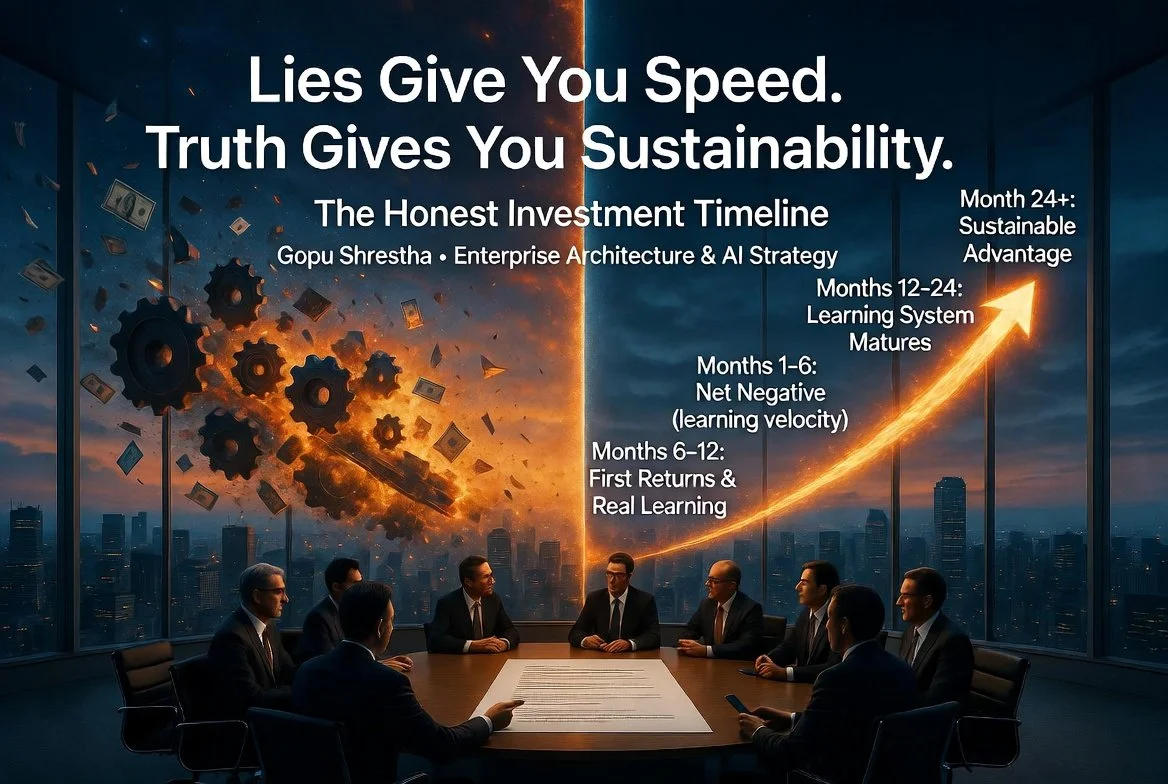

Lies Give You Speed. Truth Gives You Sustainability.

Gopu Shrestha · Enterprise Architecture & AI Strategy

The Two Ways to Waste Money on AI

There are two ways to waste money on AI.

The first is to move too slowly. Competitors learn faster, your best talent migrates toward organizations genuinely engaging with the technology, and the technical debt of delayed decisions compounds. This failure mode is real.

The second is to move fast on dishonest foundations. To deploy without honest assessment of data quality. To commit without honest understanding of production constraints. To report activity as though it were outcomes.

Organizations that write off two to fifteen million dollars in failed AI initiatives typically did not fail because they were slow. They failed because they were fast on top of fragmented data, misaligned governance, and vendor-demo assumptions that were never tested against reality.

“Lies give you speed. Truth gives you sustainability. The organizations that chose truth in month one looked slower. They weren’t. They were running a different race.”

Most organizations believe they are avoiding the first failure mode. Most are executing the second. This article will help you see the difference—and build a business case that survives contact with reality.

The Honest Investment Timeline

When working with executive teams on AI business cases, the most important reframe I offer is this: the question is not whether to invest, or how much. The question is what the investment actually buys at each phase of a genuinely honest timeline.

Months 1–6: Net Negative (and That’s Correct)

Infrastructure, team formation, data quality work, and upskilling. There is no business ROI in this phase. The value metric is learning velocity—how quickly the organization is building the capability to assess what is actually possible.

Any business case that promises returns in this window is measuring aspiration, not evidence.

Months 6–12: First Returns and Real Learning

The first models reach production. The organization discovers the difference between what worked in the pilot and what works at scale. This phase produces the most valuable organizational learning in the entire program—including what the program should not have committed to.

Months 12–24: The Learning System Matures

Genuine compounding begins. The organization develops the judgment to distinguish experiments worth continuing from those that should have been killed earlier.

Month 24+: Where Sustainable Advantage Actually Lives

The organization that has invested honestly begins to see the returns that twelve-month business cases promised but could not deliver. This is not slow. This is a different race.

Four Questions Every AI Initiative Must Answer Honestly

Before committing significant investment, these four questions must be answered with production data—not demonstration data. Most organizations skip this work, not out of negligence, but because the questions are designed to surface uncomfortable answers.

1. Does This Model Actually Work With Our Data?

Vendor demonstrations are built on clean, carefully curated data. Your organization’s data is not that. It has gaps, inconsistencies, legacy structures, and regulatory constraints the vendor demo never encountered.

The consistent pattern: organizations invest millions in AI systems without addressing data quality first. An inventory prediction system, for example, underperforms existing tools because foundational data issues were never resolved. This is not a technology failure. It is an honesty failure.

2. Will Our Users Actually Adopt It?

There is a consistent gap between uptake among early adopters and adoption by the mainstream, and AI initiatives routinely underestimate it. A pilot that succeeds with technically sophisticated early adopters will not automatically succeed with the broader user population.

Healthcare organizations frequently deploy AI tools based on scenarios that don’t reflect production complexity. When launched to real users—not the pilot cohort—these systems fail to handle actual variability, leading to abandoned implementations and organizations that spent on technology but gained none of the value.

3. What Is the Real Implementation Cost in Our Environment?

The integration cost in a regulated enterprise is almost always higher than the vendor’s estimate, and almost never accounted for in the initial business case. Legacy systems, compliance requirements, security constraints, and organizational friction all add to it.

A model without monitoring is not complete. It is a liability. The definition of “done” for an AI initiative must include: performance thresholds met on production-representative data; fairness audit completed; monitoring and drift detection configured; rollback plan documented and tested; retraining pipeline verified; business impact measurement instrumented. Any business case missing these items is not accounting for the real cost.
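One way to keep that definition of “done” from eroding under deadline pressure is to encode it as an explicit gate. The following is a minimal Python sketch, not a prescribed implementation: the class name `DefinitionOfDone` and its field names are hypothetical, chosen only to mirror the six items listed above.

```python
from dataclasses import dataclass, fields


@dataclass
class DefinitionOfDone:
    """One flag per item in the definition of "done" (hypothetical field names)."""

    performance_thresholds_met: bool = False  # on production-representative data
    fairness_audit_completed: bool = False
    monitoring_and_drift_detection_configured: bool = False
    rollback_plan_documented_and_tested: bool = False
    retraining_pipeline_verified: bool = False
    business_impact_measurement_instrumented: bool = False

    def missing_items(self) -> list[str]:
        """Return the names of the items that are not yet satisfied."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def is_done(self) -> bool:
        """The initiative counts as done only when every item is satisfied."""
        return not self.missing_items()


# Hypothetical status: the model performs and was audited, nothing else is in place.
status = DefinitionOfDone(
    performance_thresholds_met=True,
    fairness_audit_completed=True,
)
print(status.is_done())
for item in status.missing_items():
    print("missing:", item)
```

A business case can then carry the list of missing items as unbudgeted work, which makes the real cost visible before the commitment rather than after.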

4. What Compliance Issues Will Emerge in Production?

In regulated industries—financial services, healthcare, any domain where AI decisions affect people’s rights, safety, or access to services—the compliance questions that didn’t arise in procurement will arise in production.

The EU AI Act, now taking effect in stages, subjects high-risk AI systems to strict documentation, human oversight, and post-market monitoring requirements. Fines for the most serious violations reach up to seven percent of global annual turnover, above the GDPR ceiling of four percent. Organizations deploying AI at speed without mapping against this governance landscape are accumulating exposure that won’t appear on a dashboard until it is a crisis.

“In regulated industries, the gap between AI enthusiasm and AI governance isn’t a communication problem. It’s a risk management problem.”

The Antifragile Alternative

There is a specific organizational capability that separates companies that will sustain AI transformation from those that will cycle through expensive initiatives without compounding returns. I call it antifragility—and it is built, not bought.

The antifragile organization does not merely survive volatility. It gains from it. Failed experiments produce learning worth more than the cost of the experiment. Honest uncertainty is valued over performative certainty. The leader who terminates an AI initiative that is not working is recognized, not penalized.

This capability is not technical. It is the product of years of building governance discipline, psychological safety, and measurement systems that make honesty possible under pressure. It is what I mean when I argue that integrity is infrastructure—not a value statement, but a prerequisite for the kind of organizational learning that compounds over time.

The Conversation Worth Having Before the Business Case

The most valuable thing an honest leader can offer an executive team is not a more optimistic projection. It is an honest phasing model—one that tells the board exactly when to expect what, and why the first six months look like cost before they look like return.

Instead of: “We will see 3x ROI in twelve months.”

Say: “In the first six months, we are building the capability that generates sustainable returns—and answering four questions that determine whether the next five million is a good investment.”

Then walk them through the four questions above, with realistic timelines and measurable checkpoints.

This is not a pessimistic framing. It is an accurate one. And accuracy is the only framing that survives contact with reality.

Credibility is a compounding asset. Every business case anchored to honest foundations builds the account that future initiatives draw on. Every projection built on vendor demos and optimistic assumptions is a withdrawal—invisible in the short term, costly at the moment of reckoning.

Choose the finish line, not the head start.

📋 HONESTY CHECKPOINT: Review your current AI business case. How many of the projected benefits have been validated through internal experimentation with your actual data, your actual users, and your actual infrastructure? If fewer than half, the case represents aspiration rather than evidence. That is not a failure—it is a starting point. The question is whether you name it before the investment, or discover it after.
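The checkpoint arithmetic can be made explicit. The sketch below is illustrative only and rests on two assumptions that are not stated in the checkpoint itself: a benefit counts as validated only when it has been tested against actual data, actual users, and actual infrastructure together, and every benefit is weighted equally. The benefit names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ProjectedBenefit:
    name: str
    validated_on_actual_data: bool
    validated_with_actual_users: bool
    validated_on_actual_infrastructure: bool

    def is_validated(self) -> bool:
        # Assumption: all three conditions must hold for a benefit to count.
        return (
            self.validated_on_actual_data
            and self.validated_with_actual_users
            and self.validated_on_actual_infrastructure
        )


def validated_fraction(benefits: list[ProjectedBenefit]) -> float:
    """Share of projected benefits validated through internal experimentation."""
    if not benefits:
        return 0.0
    return sum(b.is_validated() for b in benefits) / len(benefits)


def checkpoint(benefits: list[ProjectedBenefit]) -> str:
    """Fewer than half validated means the case is aspiration, not evidence."""
    return "aspiration" if validated_fraction(benefits) < 0.5 else "evidence"


# Hypothetical business case with three projected benefits.
case = [
    ProjectedBenefit("faster claims triage", True, True, True),
    ProjectedBenefit("lower call-center volume", True, False, False),
    ProjectedBenefit("reduced inventory write-offs", False, False, False),
]
print(f"{validated_fraction(case):.0%} validated -> {checkpoint(case)}")
```

Here one of three benefits is fully validated, so the case reads as aspiration. Weighting benefits by their projected dollar value instead of counting them equally would be a reasonable variation.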

Where in your organization is the gap between the business case and the production reality widest right now? And what would it take to say that out loud in a leadership meeting?

Gopu Shrestha is an enterprise architect and published author working at the intersection of strategic honesty, integrity-centered leadership, and AI transformation in regulated industries.